Interactive Script Framework

Preview

Scripts are a preview feature and are not covered by the General Availability SLA.

How to create interactive annotation automations with Userscripts.

Userscripts are JavaScript (JS) programs for annotation automation.

You can include nearly any valid JavaScript in userscripts.

You can also use any other language, such as Python, via API calls.

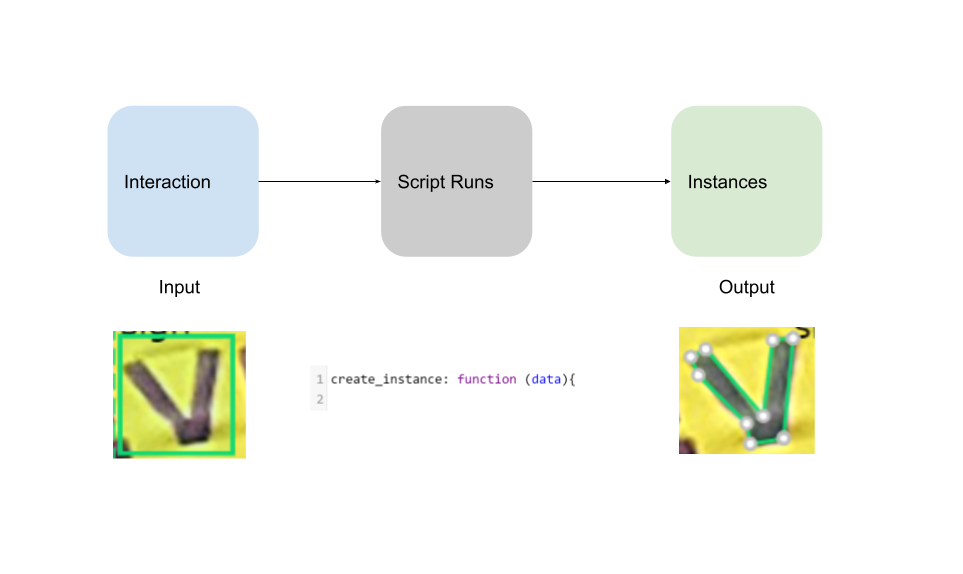

Interaction -> Scripts -> Instances

The high-level idea is that you can build custom functions inside Diffgram.

The primary classes of use cases are:

- Interactive automations, such as running models

- Modifying the UI to suit your preference, such as programmatically changing settings

- General system interactions, such as warning on duplicates
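As an illustration of the "warning on duplicates" use case, here is a minimal sketch that flags a new box when it overlaps an existing one almost completely. It uses intersection over union (IoU) as the similarity measure. The instance shape (`x_min`, `y_min`, `x_max`, `y_max`), the function names, and the `0.9` threshold are assumptions made for this example, not Diffgram's documented API.

```javascript
// Hypothetical box shape: { x_min, y_min, x_max, y_max } in pixels.
// Returns the intersection-over-union of two axis-aligned boxes (0 to 1).
function boxIoU(a, b) {
  const interW = Math.max(0, Math.min(a.x_max, b.x_max) - Math.max(a.x_min, b.x_min));
  const interH = Math.max(0, Math.min(a.y_max, b.y_max) - Math.max(a.y_min, b.y_min));
  const inter = interW * interH;
  const areaA = (a.x_max - a.x_min) * (a.y_max - a.y_min);
  const areaB = (b.x_max - b.x_min) * (b.y_max - b.y_min);
  const union = areaA + areaB - inter;
  return union > 0 ? inter / union : 0;
}

// Returns the existing boxes that overlap newBox at or above the threshold.
// The 0.9 default is an arbitrary choice for this sketch.
function findDuplicates(newBox, existingBoxes, threshold = 0.9) {
  return existingBoxes.filter((box) => boxIoU(newBox, box) >= threshold);
}

const existing = [
  { x_min: 0, y_min: 0, x_max: 10, y_max: 10 },
  { x_min: 50, y_min: 50, x_max: 60, y_max: 60 },
];
const duplicates = findDuplicates({ x_min: 0, y_min: 0, x_max: 10, y_max: 10 }, existing);
if (duplicates.length > 0) {
  console.warn(`Possible duplicate: ${duplicates.length} overlapping instance(s)`);
}
```

A script could run a check like this each time an instance is created and surface the warning to the annotator.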

Many of these are built around user interactions, where the trigger is an interaction such as creating a new instance. The script's output is usually converted into something the user can interact with again in Diffgram, such as a polygon to edit or an instance to add attributes to.

Each interaction event carries its event information, such as the newly created instance. Your script then acts on it: running a machine learning model, modifying the UI, calling an API, or anything else you want.
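The event flow described here can be sketched as a small registry that maps event keys to handlers. Everything below is hypothetical and exists only to show the pattern: `ScriptRegistry`, `register`, `dispatch`, and the event and instance shapes are invented for this example and are not Diffgram's actual API. Only the `create_instance` key comes from this page.

```javascript
// Hypothetical registry, for illustration only (not Diffgram's API).
class ScriptRegistry {
  constructor() {
    this.handlers = new Map();
  }

  // Attach a handler function to an event key such as "create_instance".
  register(eventKey, handler) {
    if (!this.handlers.has(eventKey)) {
      this.handlers.set(eventKey, []);
    }
    this.handlers.get(eventKey).push(handler);
  }

  // Call every handler for the key and collect the instances they return.
  dispatch(eventKey, event) {
    const handlers = this.handlers.get(eventKey) || [];
    return handlers.flatMap((handler) => handler(event) || []);
  }
}

const registry = new ScriptRegistry();

// Stand-in for a real model: turn the drawn box into a polygon
// that simply traces the box corners.
registry.register("create_instance", (event) => {
  const box = event.instance;
  return [
    {
      type: "polygon",
      points: [
        { x: box.x_min, y: box.y_min },
        { x: box.x_max, y: box.y_min },
        { x: box.x_max, y: box.y_max },
        { x: box.x_min, y: box.y_max },
      ],
    },
  ];
});

const created = registry.dispatch("create_instance", {
  instance: { type: "box", x_min: 10, y_min: 20, x_max: 110, y_max: 220 },
});
```

In a real script the handler body would call a model or an API instead of tracing the corners. The shape stays the same: an event comes in and new instances go out.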

For example, your script can create instances. Suppose it runs a segmentation model: you could then use OpenCV to convert the segmentation into polygon points and pass those to Diffgram as user-interactable instances.
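To make the mask-to-polygon step concrete, here is a minimal sketch in plain JavaScript. Note the substitution: instead of OpenCV contour extraction, it computes the convex hull of the foreground pixels (Andrew's monotone chain), which is simpler but loses any concave detail. The mask format (`mask[y][x]` holding 0 or 1) and the function names are assumptions for this example.

```javascript
// Collect the (x, y) coordinates of all foreground pixels in a binary mask.
function maskToPoints(mask) {
  const points = [];
  for (let y = 0; y < mask.length; y++) {
    for (let x = 0; x < mask[y].length; x++) {
      if (mask[y][x]) points.push({ x, y });
    }
  }
  return points;
}

// Z component of the cross product of vectors o->a and o->b.
function cross(o, a, b) {
  return (a.x - o.x) * (b.y - o.y) - (a.y - o.y) * (b.x - o.x);
}

// Convex hull via Andrew's monotone chain. Collinear points are dropped.
function convexHull(points) {
  const sorted = [...points].sort((p, q) => p.x - q.x || p.y - q.y);
  if (sorted.length < 3) return sorted;

  const buildChain = (ordered) => {
    const chain = [];
    for (const p of ordered) {
      while (
        chain.length >= 2 &&
        cross(chain[chain.length - 2], chain[chain.length - 1], p) <= 0
      ) {
        chain.pop();
      }
      chain.push(p);
    }
    chain.pop(); // the last point repeats as the first point of the other chain
    return chain;
  };

  const lower = buildChain(sorted);
  const upper = buildChain([...sorted].reverse());
  return lower.concat(upper);
}

const mask = [
  [0, 0, 0, 0],
  [0, 1, 1, 0],
  [0, 1, 1, 0],
  [0, 0, 0, 0],
];
const polygonPoints = convexHull(maskToPoints(mask));
```

The resulting point list is the kind of data a script would hand back as a polygon instance. For shapes with concavities, a true contour tracer such as the OpenCV approach mentioned above would give a tighter fit.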

Example - Box to Polygon

In this example, the user drew a box around the "V". The box is shown in green. A script registered on the create_instance key ran a GrabCut algorithm and output a polygon instance. The polygon is now user-interactable.

Examples

Selected Examples:

Bodypix (People Segmentation & Keypoints)

Box to Polygon (Using GrabCut)

Duplicate Detection Example

Example - Person Detection for Different Attribute

Here, we used the BodyPix example in a Diffgram userscript. It ran on the whole image and segmented all the people. We then select a person to add our own attribute, "On Phone?". This is an example of using a model that is not directly our training-data goal, to avoid having to "recreate the wheel" for parts that are well understood. You can imagine swapping this model for other popular ones, or using your own models for the first pass.

Free, Fast, and Customizable

Learn more: Why Userscripts
