Upload Files By Reference

Avoid ingesting raw data bytes by just providing blob paths and bucket references using Diffgram Connections.

Uploading raw data is slow. Images can be several MB each, and videos or GeoTIFF files can grow to several GB. Once you are working with thousands or millions of files, ingestion can become a bottleneck.

Upload By Reference is Only Supported for Images

New releases will add support for videos, text files, audio, etc.

Ingesting raw blobs also adds compute and network transfer costs for processing them.

Uploading By Reference

With Diffgram Connections, you only need to define a connection to your cloud provider. Diffgram then creates the files without transferring the actual data. Your data stays in your original buckets, and Diffgram acts only as an orchestrator of these blobs for training data purposes.

Let's see how to upload a file using a connection.

Supported Providers

Azure (Microsoft)

Amazon Web Services (AWS)

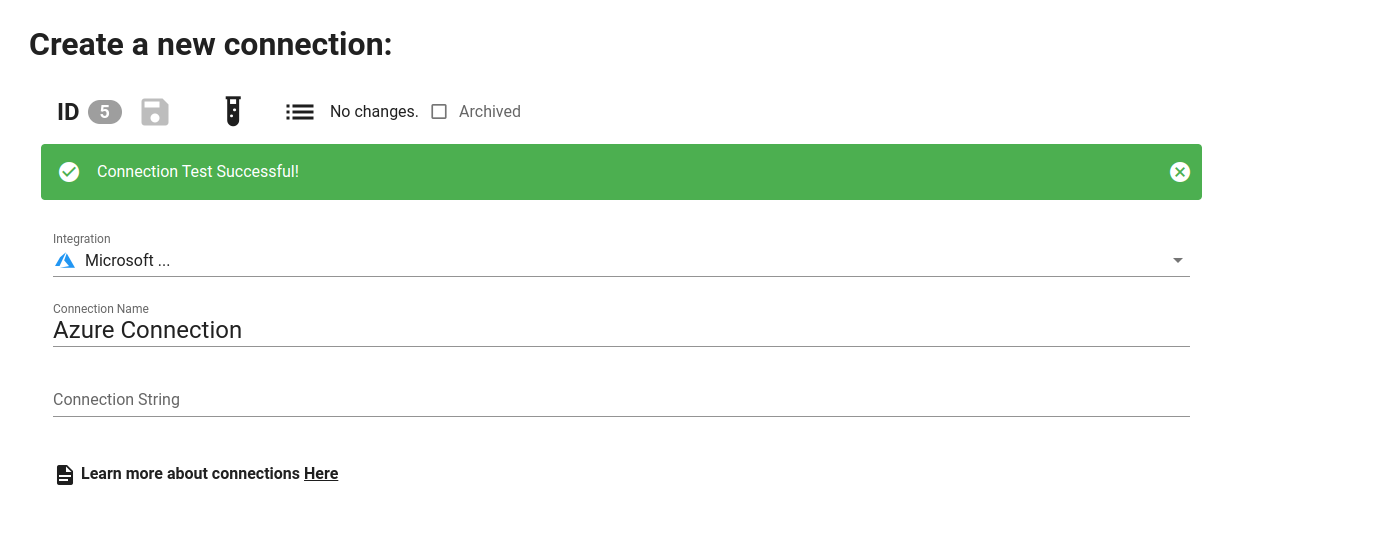

1. Create Connection

Go to the Connections section and create a new Azure or AWS connection. Set your credentials and click the test button to verify that the connection works.

Make a note of the Connection ID (in this case 5), since you will use it later in this process.

2. Use the Python SDK to Access your Project

Click the "Share" button on the top bar of the Diffgram UI and generate your project credentials. Then install the Python SDK and place the code snippet in a Python file.

It should look similar to this:

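```python
from diffgram import Project

# Credentials generated from the "Share" button in the Diffgram UI
project = Project(
    project_string_id="myproject",
    client_id="my_id",
    client_secret="my_secret"
)
```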
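To keep the client secret out of source control, the credentials can be read from environment variables before the project is constructed. The following is only a minimal sketch: the helper `load_project_credentials` and the variable names `DIFFGRAM_PROJECT_STRING_ID`, `DIFFGRAM_CLIENT_ID`, and `DIFFGRAM_CLIENT_SECRET` are hypothetical and not defined by the Diffgram SDK.

```python
import os

# Hypothetical environment variable names (not defined by the Diffgram SDK),
# mapped to the keyword arguments that Project() takes in the snippet above.
ENV_TO_ARG = {
    "DIFFGRAM_PROJECT_STRING_ID": "project_string_id",
    "DIFFGRAM_CLIENT_ID": "client_id",
    "DIFFGRAM_CLIENT_SECRET": "client_secret",
}


def load_project_credentials(environ=None):
    """Return keyword arguments for Project(), read from environment variables."""
    environ = os.environ if environ is None else environ
    missing = [name for name in ENV_TO_ARG if not environ.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {arg: environ[name] for name, arg in ENV_TO_ARG.items()}


# Usage (requires the Diffgram SDK to be installed):
# from diffgram import Project
# project = Project(**load_project_credentials())
```

Failing early with the list of missing variables makes a misconfigured environment easier to diagnose than an authentication error later on.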

3. Upload a File Using the Connection ID

Now you only need to call the method project.file.from_blob_path() and provide the blob path, the bucket (or container) name, the connection ID, and a file name.

```python
project.file.from_blob_path(blob_path='myfile 1.jpg', bucket_name='my_bucket', connection_id=5, file_name='my file.jpg')
```

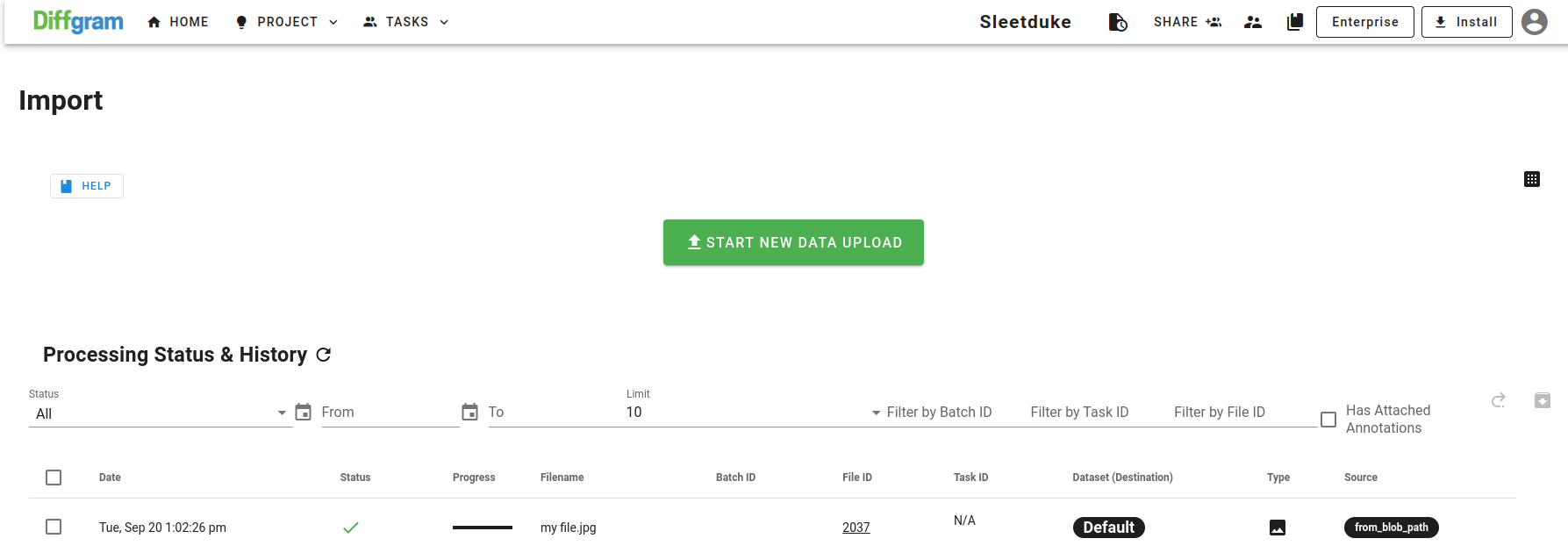

You should see the file upload succeed in the import section. Note that we did not upload any data into the Diffgram blob storage. Instead, we are just referencing the file and accessing it with the credentials of the connection created for the project.
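The same call can be repeated for many blobs. The sketch below loops over a list of blob paths and references each one with the project.file.from_blob_path() arguments shown above. The helper `register_blobs`, the `IMAGE_EXTENSIONS` filter, and the choice of the last path segment as the file name are assumptions made for illustration, not part of the SDK; the filter simply reflects the images-only limitation noted earlier.

```python
# Extensions treated as images; an illustrative assumption, not an SDK constant.
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png")


def register_blobs(project, blob_paths, bucket_name, connection_id):
    """Reference each image blob by path; return (registered, skipped) lists."""
    registered, skipped = [], []
    for blob_path in blob_paths:
        # Skip anything that does not look like an image.
        if not blob_path.lower().endswith(IMAGE_EXTENSIONS):
            skipped.append(blob_path)
            continue
        project.file.from_blob_path(
            blob_path=blob_path,
            bucket_name=bucket_name,
            connection_id=connection_id,
            # Use the last path segment as the file name (an assumption).
            file_name=blob_path.rsplit("/", 1)[-1],
        )
        registered.append(blob_path)
    return registered, skipped


# Usage, with `project` created as in step 2 and the Connection ID from step 1:
# registered, skipped = register_blobs(
#     project, ["images/cat.jpg", "images/dog.png"], "my_bucket", 5
# )
```

Returning the skipped paths makes it easy to see which blobs were left out because their type is not yet supported.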
