Introduction

A Signed URL is like the "view with link" option in a web-based document editor.

It creates a link with a credential (effectively a password) embedded in the link itself, so the resource can be shared. (A resource could be a video file, for example.)

Additionally, it's a common pattern to include a time decay, so the link stops working after a set period.

We recommend an expiry of at least 30 days. Media is generally processed into Diffgram within a few hours, but in the odd case that you wish to re-import it, it's cleaner to have the URL still available than to delete the files and re-import from scratch.
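As a rough sketch of the 30-day recommendation, the expiry can be computed as a Unix timestamp. The helper name `expiry_timestamp` and its parameters are illustrative only; they are not part of Diffgram or any cloud SDK.

```python
import time

SECONDS_PER_DAY = 60 * 60 * 24


def expiry_timestamp(days=30, now=None):
    """Return a Unix timestamp `days` days after `now` (defaults to the current time)."""
    if now is None:
        now = time.time()
    return int(now + days * SECONDS_PER_DAY)


# With a fixed starting point of 0, 30 days is 2,592,000 seconds.
print(expiry_timestamp(days=30, now=0))  # 2592000
```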

Generate Signed URL from Cloud Storage

Signed URLs allow secure access to a resource. Usually they are time decayed, meaning they expire after some period of time.

Docs from popular cloud providers:

The generate_signed_url function is an easy way to do this; see the example below.

All providers supported

All we are looking for is an accessible URL to the resource. This means that virtually any cloud provider, as well as your own bare-metal servers, is supported.

The "signed" part is a pattern to secure access. This is similar to the "view with link" option in, say, a document editor, but more secure because access is time decayed.
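To make the pattern concrete, here is a minimal sketch of signing and checking a time-limited URL with an HMAC. It is a generic illustration, not the scheme any particular cloud provider uses. The secret key, the `expires` and `signature` query parameters, and the function names are all assumptions made for this example.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlsplit

SECRET_KEY = b"example-secret"  # hypothetical; known only to the server


def _signature(path, expires):
    # Sign the path together with the expiry so neither can be altered.
    message = f"{path}:{expires}".encode()
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def sign_url(base_url, expires):
    """Append an expiry timestamp and its signature to base_url."""
    path = urlsplit(base_url).path
    query = urlencode({"expires": expires, "signature": _signature(path, expires)})
    return f"{base_url}?{query}"


def verify_url(url, now=None):
    """Return True only if the signature matches and the URL has not expired."""
    now = time.time() if now is None else now
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    try:
        expires = int(params["expires"][0])
        signature = params["signature"][0]
    except (KeyError, ValueError):
        return False
    if now > expires:
        return False
    expected = _signature(parts.path, expires)
    return hmac.compare_digest(signature.encode(), expected.encode())
```

Anyone holding the link can fetch the resource until `expires` passes, but changing the path or the expiry invalidates the signature.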

Google IAM Tips

Please refer to the Google Cloud IAM documentation for generating a service account.

As an unofficial suggestion, we have found that these permissions are generally what is required. Note that Google may change these permissions and roles without notice:

storage.buckets.get

storage.objects.create

storage.objects.delete

storage.objects.get

storage.objects.list

OR

Role of: 'Storage Admin'

Note that Google distinguishes between the "Storage Admin" and "Storage Object Admin" roles.
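One way to check a service account against the permission list above is `Bucket.test_iam_permissions` in `google-cloud-storage`, which returns the subset of the requested permissions that the caller holds. The sketch below assumes that library is installed and that the service account file exists. The helper names `missing_permissions` and `check_bucket` are illustrative.

```python
REQUIRED_PERMISSIONS = [
    "storage.buckets.get",
    "storage.objects.create",
    "storage.objects.delete",
    "storage.objects.get",
    "storage.objects.list",
]


def missing_permissions(granted, required=REQUIRED_PERMISSIONS):
    """Return the required permissions that are absent from `granted`."""
    granted = set(granted)
    return [p for p in required if p not in granted]


def check_bucket(bucket_name, service_account_path):
    """Ask Cloud Storage which required permissions the service account lacks."""
    # Imported here so the pure helper above works without the library installed.
    from google.cloud import storage

    client = storage.Client.from_service_account_json(service_account_path)
    bucket = client.bucket(bucket_name)
    granted = bucket.test_iam_permissions(REQUIRED_PERMISSIONS)
    return missing_permissions(granted)
```

An empty list from `check_bucket("your-bucket", "service_account.json")` would suggest the account holds every permission listed.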

Send from defined prefix

# pip install google-cloud-storage
# pip install diffgram

import os
import time

from google.cloud import storage
from diffgram import Project

# Docs: https://googleapis.github.io/google-cloud-python/latest/storage/blobs.html?highlight=generate_signed_url#google.cloud.storage.blob.Blob.generate_signed_url

project = Project(
    project_string_id = "",
    client_id = "",
    client_secret = "")

# To get the service account .json

# see https://console.cloud.google.com/iam-admin/iam

# https://cloud.google.com/iam/docs/creating-managing-service-accounts

SERVICE_ACCOUNT = "..\..\shared\helpers\service_account.json"

# Storage bucket name

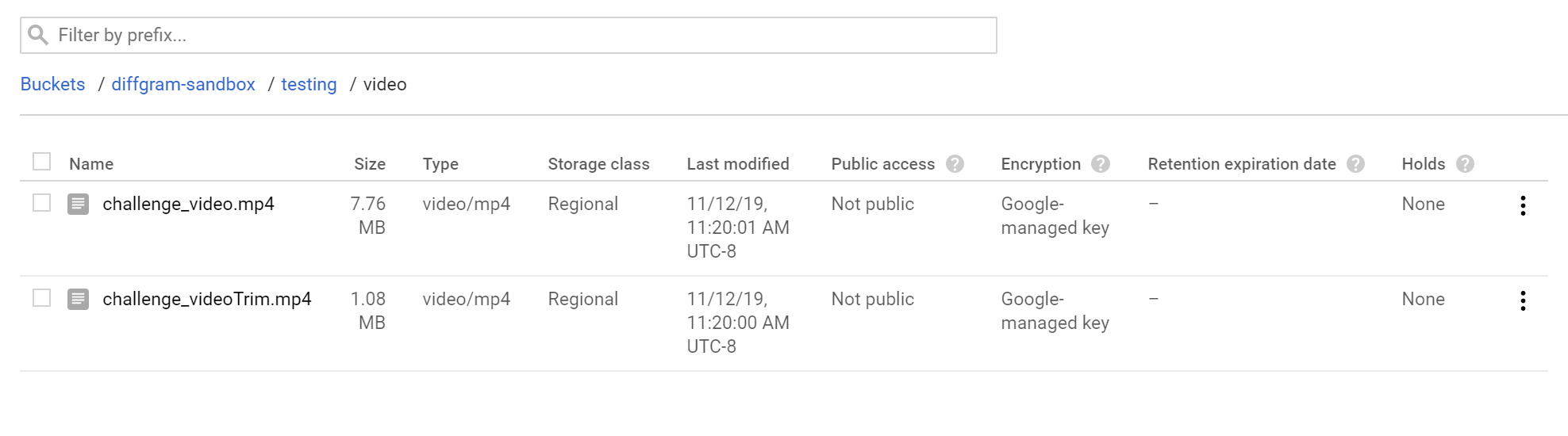

CLOUD_STORAGE_BUCKET = "your-bucket"

def get_gcs_service_account(gcs):

path = os.path.dirname(os.path.realpath(__file__)) + "/" + SERVICE_ACCOUNT

return gcs.from_service_account_json(path)

gcs = storage.Client()

gcs = get_gcs_service_account(gcs)

prefix = "something/"

# Ref: https://googleapis.dev/python/storage/latest/client.html#google.cloud.storage.client.Client.list_blobs
blobs = gcs.list_blobs(CLOUD_STORAGE_BUCKET, prefix=prefix)

# Note: a new job is created here
job = project.job.new(
    name = "Example Job" + str(time.time()),
    instance_type = "box",
    share = "project",
    job_type = "Normal")

# Signed URLs expire 30 days from now
blob_expiry = int(time.time() + (60 * 60 * 24 * 30))

for blob in blobs:
    print(blob.name)

    if blob.name == prefix:
        continue  # exclude root

    signed_url = blob.generate_signed_url(expiration=blob_expiry)

    result = project.file.from_url(
        signed_url,
        media_type="video",
        job = job
    )

    time.sleep(1)  # pause between requests
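The loop above sends every object under the prefix as a video. If the prefix may also hold other files, a small filter on the object name could be applied first. This is only a sketch: the extension set and the function name `is_video_object` are assumptions for illustration, not a list of formats Diffgram supports.

```python
import os

VIDEO_EXTENSIONS = {".mp4", ".mov", ".avi"}  # assumed for illustration


def is_video_object(name, prefix=""):
    """Return True if an object name looks like a video file under the prefix."""
    # Skip the prefix itself and any "directory" placeholder objects.
    if name == prefix or name.endswith("/"):
        return False
    extension = os.path.splitext(name)[1].lower()
    return extension in VIDEO_EXTENSIONS
```

Inside the loop, `if not is_video_object(blob.name, prefix): continue` could take the place of the root check.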